
In Databricks Runtime and in Databricks SQL, you can temporarily disable ANSI mode to tolerate an overflow. If necessary, set the ANSI mode configuration setting to "false" (except for ANSI interval types) to bypass this error. The examples cover these cases:

- Allowing overflows to be treated as NULL
- An occasional overflow that should be tolerated
- An overflow of a complex expression which can be rewritten
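The idea of treating an overflow as NULL, mentioned above, can be sketched in Java. This is only an analogy and not how Databricks implements it: the class and method names are hypothetical, and `Math.addExact` from the Java standard library stands in for an overflow-checked addition.

```java
final class NullOnOverflowDemo {
    // Hypothetical helper: returns a + b, or null when the sum does not fit in an int.
    static Integer addOrNull(int a, int b) {
        try {
            // Math.addExact throws ArithmeticException instead of wrapping around.
            return Math.addExact(a, b);
        } catch (ArithmeticException e) {
            return null;
        }
    }

    public static void main(String[] args) {
        System.out.println(addOrNull(1, 2));
        System.out.println(addOrNull(Integer.MAX_VALUE, 1));
    }
}
```

Returning `null` keeps the computation going at the cost of losing the value, so it only suits cases where an occasional missing result is acceptable.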

Use a wider numeric type to perform the operation by casting one of the operands. If necessary, set ansi_mode to "false" (except for ANSI interval types) to bypass this error.

In Java, the case can be reported with the expression `("Integer Overflow: " + Integer.MIN_VALUE + "/-1 " + i)`.

Further Work (optional; check with your instructor whether you need to answer the following questions). For each of the following, give the appropriate Java declaration: the number of students at your college.

You cannot change the expression, and you would rather get wrapped results than return an error? As a last resort, disable ANSI mode by setting the ansiConfig to false.

Examples - An overflow of a small numeric
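As a small illustration of the `Integer.MIN_VALUE / -1` case and of widening by casting one operand, here is a Java sketch. The class and method names are hypothetical and chosen only for this example.

```java
final class DivisionOverflowDemo {
    // Plain int division: Integer.MIN_VALUE / -1 does not fit in an int
    // and silently wraps back to Integer.MIN_VALUE.
    static int wrappedDivide(int a, int b) {
        return a / b;
    }

    // Casting one operand to long makes the whole division happen in long,
    // which is wide enough to hold the true quotient.
    static long widenedDivide(int a, int b) {
        return (long) a / b;
    }

    // Reports the overflow instead of returning a wrapped result.
    static int checkedDivide(int a, int b) {
        if (a == Integer.MIN_VALUE && b == -1) {
            throw new ArithmeticException("Integer Overflow: " + a + "/-1");
        }
        return a / b;
    }

    public static void main(String[] args) {
        System.out.println(wrappedDivide(Integer.MIN_VALUE, -1));
        System.out.println(widenedDivide(Integer.MIN_VALUE, -1));
    }
}
```

Widening is usually the least intrusive option, since the expression keeps its shape and only the result type changes.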

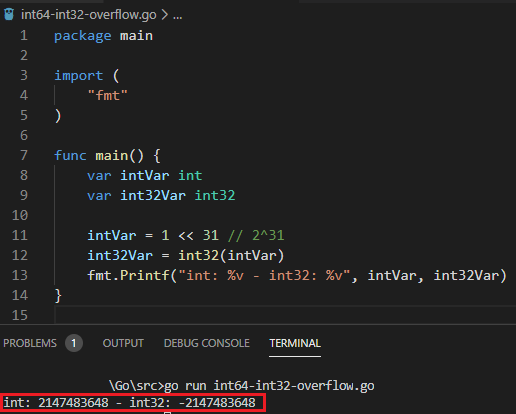

An arithmetic overflow occurs when Azure Databricks performs a mathematical operation that exceeds the maximum range of the data type in which the operation is performed. In many cases math is performed in the least-common type of the operands of an operator, or the least-common type of the arguments of a function. Adding two numbers of type TINYINT can quickly exceed the type's range, which is limited from -128 to +127. Other types such as TIMESTAMP and INTERVAL also have a large, but finite range. For a definition of the domain of a type see the definition for the data type.

The error message has these parameters:

- message: A description of the expression causing the overflow.
- alternative: Advice on how to avoid the error.
- config: The configuration setting to alter ANSI mode. If necessary, set it to "false" to bypass this error.

The mitigation for this error depends on the cause:

- Are the math or any of the input arguments incorrect? Correct the functions used or the input data as appropriate. You may also consider reordering operations to keep intermediate results in the desired range.
- Widen the type by casting one of the arguments to a type sufficient to complete the operation. Choosing DOUBLE or DECIMAL(38, s) with an appropriate s provides a lot of range at the cost of rounding.
- Can you tolerate overflow conditions and replace them with NULL? Change the expression to use the function proposed in alternative.

This error is reported in Java, and specifically on Android. Reported as Integer Overflow U5 by bufferoverrun.

The same issue also exists when using the "compress" functions that receive double, float, int, long and short, each using a different multiplier that may cause the same issue. The issue most likely won't occur when using a byte array, since creating a byte array of size 0x80000000 (or any other negative value) is impossible in the first place.
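The multiplier problem mentioned above can be illustrated with a short Java sketch. It assumes that the multiplier is the element size in bytes (for example 8 for long or double), which the text does not state explicitly, and the helper names are hypothetical rather than taken from the library.

```java
final class ByteSizeOverflowDemo {
    // Hypothetical helper: int multiplication wraps silently when
    // elementCount * bytesPerElement exceeds the int range.
    static int byteSizeWrapped(int elementCount, int bytesPerElement) {
        return elementCount * bytesPerElement;
    }

    // Same computation, but Math.multiplyExact throws ArithmeticException
    // instead of returning a wrapped (possibly negative or zero) size.
    static int byteSizeChecked(int elementCount, int bytesPerElement) {
        return Math.multiplyExact(elementCount, bytesPerElement);
    }

    public static void main(String[] args) {
        // 0x10000000 elements of 8 bytes each need 0x80000000 bytes,
        // which is negative when stored in a signed int.
        System.out.println(byteSizeWrapped(0x10000000, Long.BYTES));
        System.out.println(byteSizeWrapped(0x20000000, Long.BYTES));
    }
}
```

Validating the size with a checked multiplication before any buffer is allocated turns the silent wrap into an explicit error.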
Since the maxCompressedLength function treats the length as an unsigned integer, it doesn't care that it is negative, and it returns a valid value, which is cast to a signed integer by the Java engine. If the result is negative, an exception will be raised while trying to allocate the array "buf". On the other hand, if the result is positive, the "buf" array will be allocated successfully, but its size might be too small to use for the compression, causing a fatal Access Violation error.

The affected function is declared as `public static byte[] rawCompress(Object data, int byteSize)`. It allocates the byte array `buf`, calls `rawCompress(data, 0, byteSize, buf, 0)`, allocates the byte array `result`, and finishes with `arraycopy(buf, 0, result, 0, compressedByteSize)`.

Due to limitations in computer memory, programs sometimes encounter issues with roundoff, overflow, or precision of numeric variables.
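The signed/unsigned mismatch described above can be modeled with a simplified Java sketch. The helper `maxCompressedLengthModel` is a hypothetical stand-in, not the library's function, and the bound `32 + n + n/6` as well as the 32-bit truncation are assumptions made only to show both outcomes.

```java
final class SignedCastDemo {
    // Hypothetical model: reads byteSize as an unsigned 32-bit value,
    // computes an assumed worst-case bound, then truncates the result
    // back to a signed 32-bit int, as a cast on the Java side would.
    static int maxCompressedLengthModel(int byteSize) {
        long n = Integer.toUnsignedLong(byteSize);
        long bound = 32 + n + n / 6;
        return (int) bound;
    }

    // Classifies what an allocation of that size would lead to.
    static String allocationOutcome(int byteSize) {
        int size = maxCompressedLengthModel(byteSize);
        if (size < 0) {
            return "negative size: allocation would throw";
        }
        if (Integer.toUnsignedLong(size) < Integer.toUnsignedLong(byteSize)) {
            return "buffer smaller than input";
        }
        return "ok";
    }

    public static void main(String[] args) {
        System.out.println(allocationOutcome(1000));
        System.out.println(allocationOutcome(0x80000000));
        System.out.println(allocationOutcome(0xF0000000));
    }
}
```

In this model a byteSize of 0x80000000 yields a negative buffer size, while 0xF0000000 wraps around to a positive size that is smaller than the input, which matches the two failure modes in the text.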
